Leading the Industry with AI Brand Report

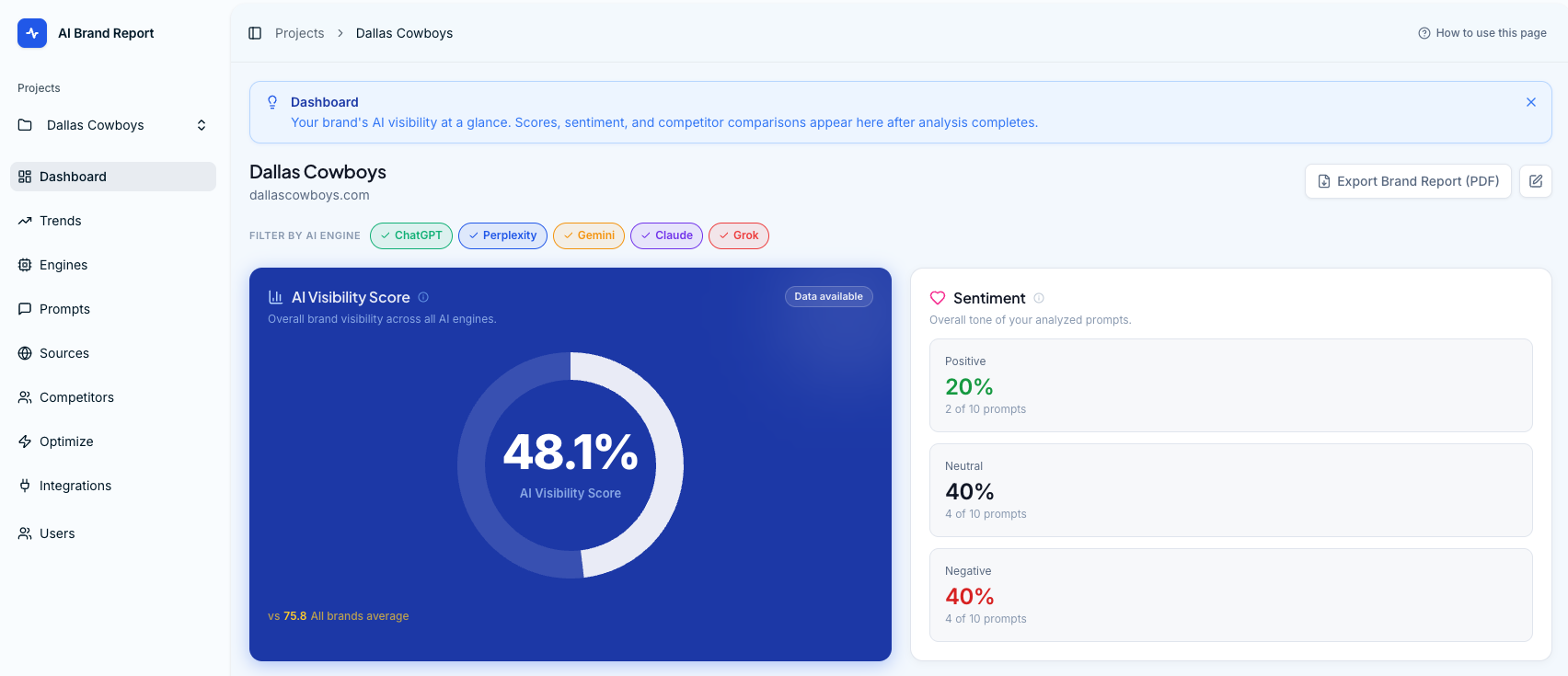

When ChatGPT, Gemini, Claude, Grok, and Perplexity began shaping how prospects discover and evaluate brands, our agency clients started asking the same question: "What is AI saying about us?" Traditional SEO tools couldn't answer it. Sentiment platforms couldn't either. So in 2025, One Cloud Media's engineering team set out to build the system we needed: a platform that could query the major AI engines at scale, normalize the responses, and translate raw output into a prioritized action plan.

What started as an internal tool for our agency clients quickly became something more. By 2026, after months of refinement and proven results across our client base, we released AI Brand Report as a standalone SaaS product, now used by agencies, in-house marketing teams, and enterprise brands to measure and improve how they appear in AI-generated answers.

The Challenge

Generative AI has become a primary discovery channel. Buyers ask ChatGPT for recommendations before they ever open a search tab, and the answer they receive, whether accurate, outdated, or missing entirely, shapes the decision. But measuring AI brand presence is fundamentally different from measuring search rankings. There is no SERP to scrape, no keyword position to track, and no single source of truth. Each engine returns slightly different answers, framed by training data, live retrieval, and conversational context that all shift over time.

Our agency teams were running brand checks manually: opening private sessions across five different AI platforms, prompting them in dozens of variations, copying responses into spreadsheets, and trying to assemble a coherent picture. It was slow, inconsistent, and impossible to scale across multiple clients. We needed a system that could run thousands of queries across every major AI engine, capture and structure the results, surface what mattered, and tell our team (and eventually our clients) exactly what to do about it.

The Solution: AI Orchestration at Scale

The core engineering challenge wasn't the user interface. It was building an orchestration layer that could reliably query five different AI engines, each with its own API contracts, rate limits, response formats, and quirks, and normalize their output into a single, comparable dataset. That orchestration layer became the heart of the platform.
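As a rough illustration of the adapter idea, the sketch below fans one prompt out to several engines in parallel and retries each call with exponential backoff. It is not the platform's actual code: `EngineResponse`, `RateLimited`, `query_with_retry`, `query_all`, and `make_fake_adapter` are hypothetical names, and the adapters are in-memory stand-ins rather than real API clients.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable, Dict, List

@dataclass
class EngineResponse:
    engine: str
    prompt: str
    text: str

# Assumption: an adapter is any async callable that takes a prompt
# and returns the engine's raw text answer.
Adapter = Callable[[str], Awaitable[str]]

class RateLimited(Exception):
    """Raised by a (hypothetical) adapter when the engine says to slow down."""

async def query_with_retry(engine: str, adapter: Adapter, prompt: str,
                           max_attempts: int = 3,
                           base_delay: float = 0.01) -> EngineResponse:
    """Call one adapter, retrying rate-limited or empty responses with backoff."""
    for attempt in range(max_attempts):
        try:
            text = await adapter(prompt)
            if not text.strip():
                raise ValueError("empty response")  # minimal response validation
            return EngineResponse(engine, prompt, text)
        except (RateLimited, ValueError):
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            await asyncio.sleep(base_delay * 2 ** attempt)
    raise RuntimeError("unreachable when max_attempts >= 1")

async def query_all(adapters: Dict[str, Adapter], prompt: str) -> List[EngineResponse]:
    """Query every engine in parallel and keep only the successful responses."""
    tasks = [query_with_retry(name, fn, prompt) for name, fn in adapters.items()]
    results = await asyncio.gather(*tasks, return_exceptions=True)
    return [r for r in results if isinstance(r, EngineResponse)]

def make_fake_adapter(name: str, failures: int = 0) -> Adapter:
    """Stand-in adapter that is rate-limited `failures` times, then answers."""
    state = {"remaining": failures}
    async def adapter(prompt: str) -> str:
        if state["remaining"] > 0:
            state["remaining"] -= 1
            raise RateLimited(name)
        return f"{name} answer to: {prompt}"
    return adapter
```

In this sketch a failing engine is dropped from the result set instead of failing the whole batch, which is one reasonable choice when the goal is a baseline built from many runs; a real system would presumably also log the failure.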

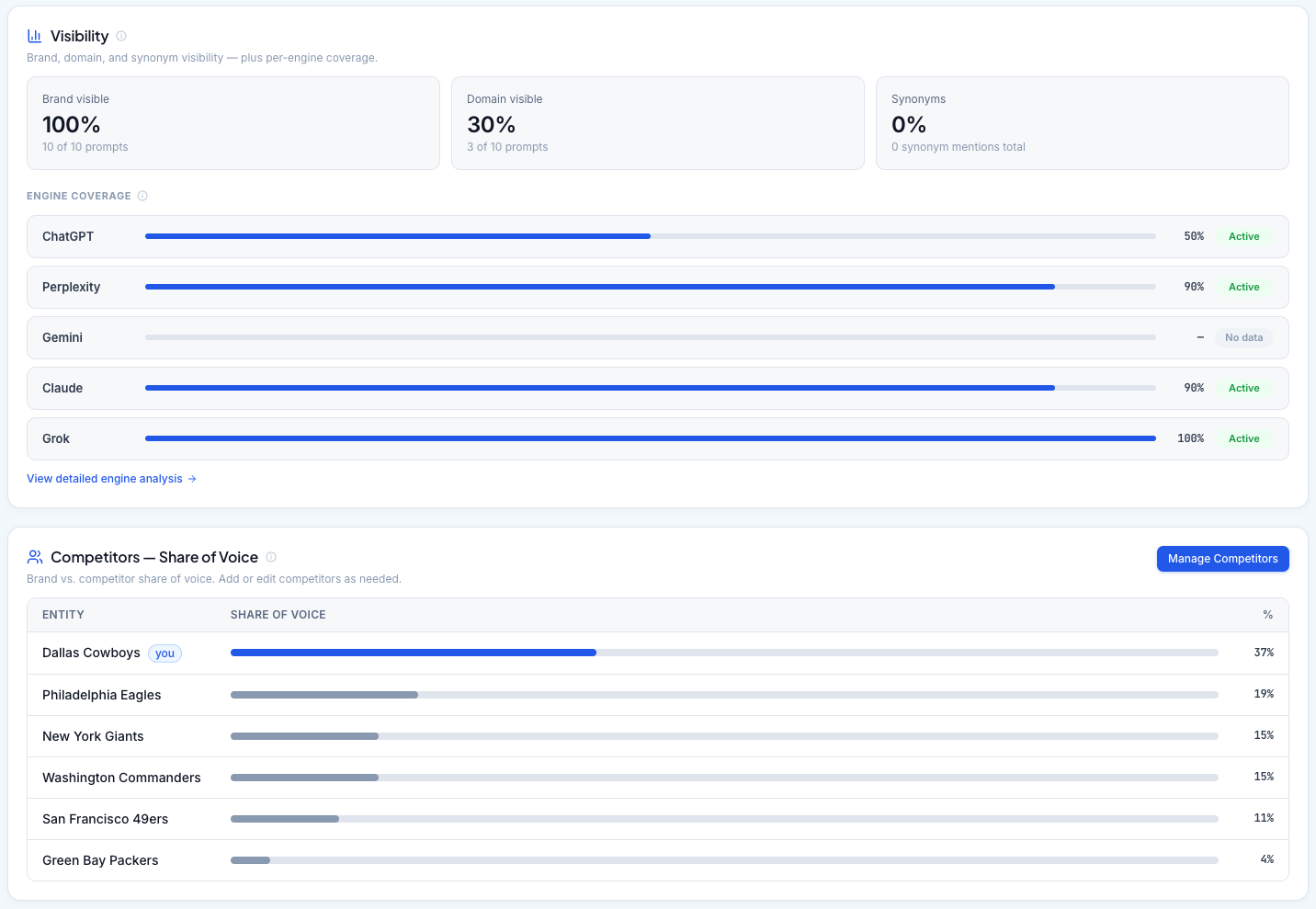

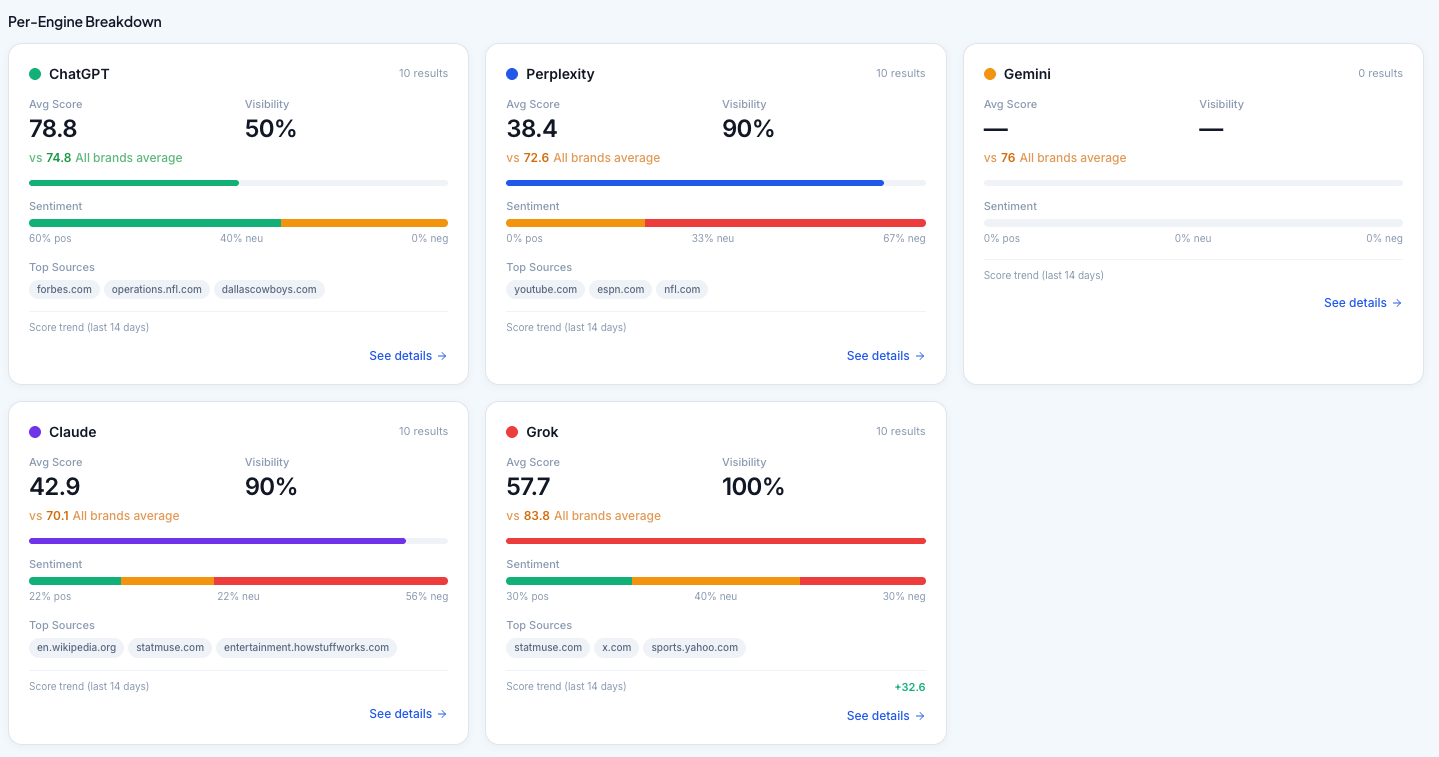

- Multi-Engine Query Engine: We built a unified interface that runs prompts in parallel across ChatGPT, Gemini, Claude, Grok, and Perplexity. Each engine is queried through its own adapter, with retry logic, rate-limit handling, and response validation tuned to that engine's behavior. Thousands of queries run against each brand's prompt set to build a statistically meaningful baseline rather than a single-shot snapshot.

- Response Normalization and Entity Extraction: Raw AI responses are messy. Citations appear in different formats, brand mentions are inconsistent, and sentiment is implicit. We engineered a parsing pipeline that extracts brand mentions, identifies competitor co-occurrences, captures cited URLs, and scores sentiment in a consistent way across all five engines, so a mention in Claude can be compared like-for-like with a mention in ChatGPT.

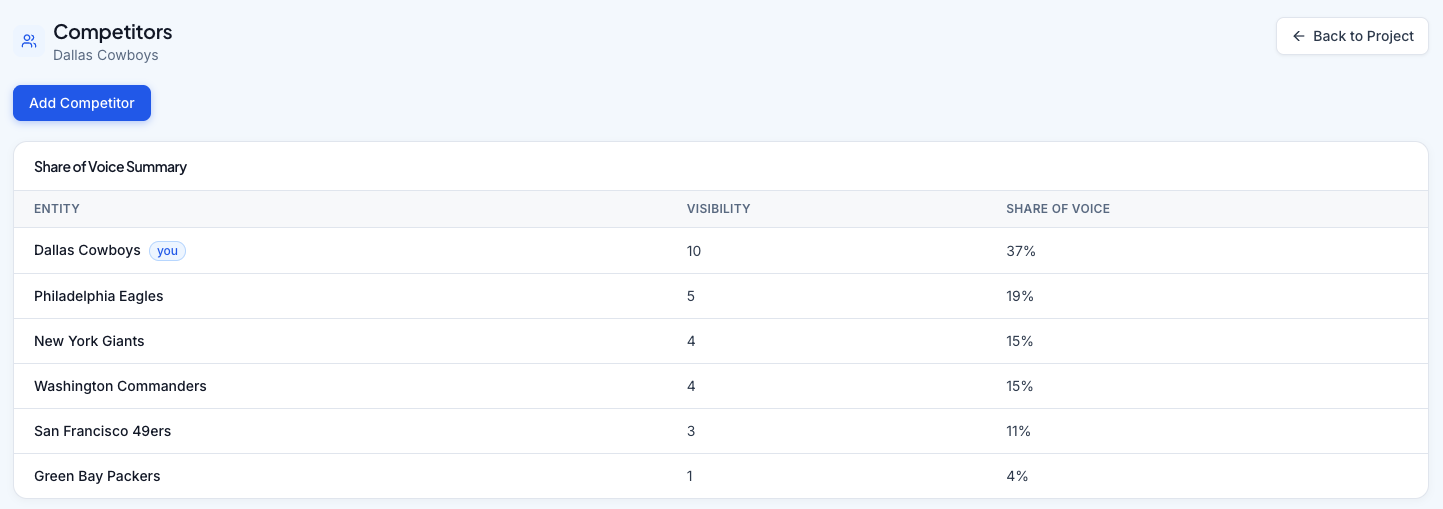

- Visibility, Sentiment, and Citation Scoring: The platform translates thousands of normalized data points into a small set of metrics that actually mean something: visibility share against named competitors, sentiment trend, citation source authority, and prompt-level coverage gaps. The scoring model is calibrated against real client outcomes, not theoretical weights.

- The Optimization Hub: Identifying problems is only half the job. The platform's Optimization Hub uses our findings to generate ready-to-publish content plans, the specific articles, FAQs, and structured content that, once published and indexed, change what the AI engines say next time. This is the feature our agency teams said made the platform indispensable.

- Trending Reports and Proof of Impact: Because AI responses shift constantly, point-in-time reports aren't enough. We built a trending engine that tracks score movement, sentiment shifts, and competitive position over 30-, 90-, and 365-day windows, so teams can prove the strategy is working.

- White-Label Reporting for Agencies: Once we knew this would become a product for other agencies, we built exportable, brand-customizable PDF reports designed for client presentations and quarterly reviews, turning the platform's output into a polished deliverable in a click.
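To make the normalization and visibility-share ideas above concrete, here is a minimal sketch under stated assumptions. It treats a "mention" as a case-insensitive whole-word match and defines visibility share as each brand's portion of all tracked-brand mentions. Both definitions, and the names `count_mentions` and `visibility_share`, are illustrative assumptions; the platform's calibrated scoring model is not described in enough detail here to reproduce.

```python
import re
from collections import Counter
from typing import Dict, Iterable, List

def count_mentions(responses: Iterable[str], brands: List[str]) -> Counter:
    """Count how many responses mention each brand at least once."""
    counts = Counter({brand: 0 for brand in brands})
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match; real responses would
            # likely need alias and misspelling handling as well.
            pattern = r"\b" + re.escape(brand) + r"\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts

def visibility_share(counts: Counter) -> Dict[str, float]:
    """Each brand's share of all tracked-brand mentions (assumed definition)."""
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in counts}
    return {brand: n / total for brand, n in counts.items()}

# Example with made-up brands and responses.
responses = [
    "Acme and Globex are popular.",
    "Try acme first.",
    "Globex is fine.",
    "No brands here.",
]
shares = visibility_share(count_mentions(responses, ["Acme", "Globex", "Initech"]))
```

Because the same extraction runs on every engine's text, the resulting shares can be compared across engines, which is the like-for-like property the normalization step is meant to provide.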

From Internal Tool to SaaS Product

The transition from agency-internal tooling to commercial SaaS was deliberate. Every architectural decision in the second half of 2025 was made with multi-tenancy, role-based access, and team collaboration in mind. Agency Team and Client Guest roles, brand-level permissioning, and team seat management were built before we ever charged a dollar. By the time we opened public registration in 2026, the platform had already been pressure-tested against real agency workflows, real client questions, and real reporting deadlines.

The product launched with four pricing tiers (Starter, Professional, Agency, and Enterprise) covering everything from a single brand at $49.95/mo to unlimited brands with API access and dedicated success management at the Enterprise level. Integrations with Google Search Console and Google Analytics tie AI visibility data to real referral traffic, closing the loop between "AI says this about you" and "this is what AI is sending to your website."

The Results

AI Brand Report now serves agencies, in-house marketing teams, and enterprise brands that need a defensible answer to the question their leadership keeps asking: "How are we showing up in AI?"

- For our agency clients, what used to be a multi-day manual audit is now a baseline report generated in minutes, with a prioritized action plan attached.

- For other agencies, the white-label tier turned a tooling problem into a productized service offering they can resell.

- For in-house teams, the trending and integration features have made AI visibility a reportable, defensible KPI, not a gut-feel concern.

Why One Cloud Media?

This project is the clearest expression of how we work. We don't build software for the sake of building software. We build it when the problem in front of us has no good answer in the market. AI Brand Report exists because our agency teams hit a real wall, and our engineering team had the depth to build through it. The fact that it became a commercial product is a consequence of solving the problem properly, not the goal we started with.

We architect digital systems for clients across healthcare, education, government, manufacturing, and commerce. We also build our own. AI Brand Report is proof that the same team you'd hire for a Drupal migration or a custom platform build is the team capable of shipping production-grade SaaS, and that the depth of engineering craft we bring to client work is the same craft we apply when we build for ourselves.